Before AI Takes Your Job, Fear Will

The Bank Run on Us

The only thing we have to fear is...fear itself.

—Franklin D. Roosevelt

Fear is the mind-killer.

—Frank Herbert, Dune

We suffer more in imagination than in reality.

—Seneca

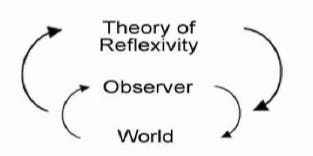

Above: When craze crescendos.

Meta is reportedly planning layoffs that could hit at least 20% of the company. Roughly 15,000 people. The purpose, per Reuters, is to offset the cost of spending $600 billion by 2028 on data centers.

Meta’s stock rose nearly 3% on the news. This was not unexpected; the company is following a playbook that has already worked.

A few weeks earlier, Block fired nearly half its workforce — more than 4,000 people — with CEO Jack Dorsey proclaiming that AI “fundamentally changes what it means to build and run a company.” Block’s shares soared as much as 24% after the announcement.

Fifteen thousand jobs cut at Meta and the stock goes up.

Four thousand jobs slashed at Block and the stock surges 24%.

See a pattern?

And, mind you, these upswings in price are not because these companies are hurting. (Meta posted more than $200 billion in revenue last year. Block’s gross profit was up 24% year over year.) Nor are they due to AI proving that it can do the work those people do.

No, the stocks went up because the market has learned to read layoffs as a buy signal. It’s a sad, mechanical sort of formula: fewer humans + higher margins + vague AI-speak = forward-thinking management.

The companies offered a bloodless human sacrifice and Wall Street bestowed its blessing.

This is neither a technology story nor, more broadly, technological determinism. It’s the social self-hypnosis of an entire economy acting on a conviction that has yet to be truly tested.

In January, Harvard Business Review published a piece that should have chilled things considerably: Companies Are Laying Off Workers Because of AI’s Potential — Not Its Performance. In it, Thomas Davenport and Laks Srinivasan reported their findings after surveying more than 1,000 global executives at the end of 2025.

60% of organizations had already reduced headcount in anticipation of AI’s future impact. Another 29% had slowed hiring for the same reason. Only 2% reported making large layoffs tied to actual AI implementation.[1]

60% cutting for potential. 2% cutting for performance.

44% said generative AI was the hardest form of AI to assess for economic value. Employees think the productivity gains are far smaller than what executives expect.

Davenport and Srinivasan put it bluntly: companies are “making decisions to reduce headcount before they see the benefits of AI’s impact.”

Though the technological promise hasn’t arrived, the layoffs sure as hell have.

A while back, I wrote an essay titled “You Better Believe It: The Reflexive Theory of Everything.” It explored George Soros’s theory of reflexivity:

Reflexivity posits that our perceptions do not just reflect the world around us but shape it. [Soros] argues that financial markets are not merely passive mirrors of reality but are instead shaped by the perceptions and beliefs of the participants. This creates a feedback loop where initial beliefs influence actions that change outcomes, thus reinforcing or altering the original beliefs.

I tracked reflexivity from markets to pandemic-era toilet paper:

Recall the sudden spike of panic-buying and hoarding during COVID, a wave of activity caused by fears of shortages that led to, you guessed it, actual shortages.

Across the chaotic variety of daily life, a simple fact holds: Reality is a lot more malleable than we think.

In place of Descartes’s Cogito, ergo sum, I offered Credo, ergo erit. I believe, therefore it will be.

I wrote that essay about Babe Ruth and stock prices and job interviews. I meant it as a meditation on personal belief. Instead, it has metastasized into something with societal implications.

We are psyching ourselves out so badly that the imagined future is beginning to govern the present. Companies are laying people off not because AI has already replaced them, but because executives fear a world in which it soon might.

Executives believe AI might soon substitute for labor; because of this they freeze hiring, cut teams, and redesign organizations around that expectation. Workers, seeing those moves, internalize scarcity and precarity. That anxiety then changes how they work, what they study, what risks they take, and what kinds of companies get built.

The prophecy doesn’t need to be true in order to start becoming true. The belief that AI will replace us, whether it’s real or a mirage, is doing more of the heavy lifting than AI itself. The jury is still very much out on whether these mechanical miracles are up to the task of replacing us wholesale.[2]

But it doesn’t matter. The belief is already doing the damage. What could be dictates what is, and what is then hardens into what will be.

Banking provides a timeless metaphor for this.

Banks work because of trust. You deposit your money, I deposit mine, and nobody worries about getting it back because we all believe the system is sound. The money isn’t sitting in a vault; the bank lends most of it out, and collective belief holds the structure together.

A bank run starts not when the bank actually fails, but when enough people believe it might fail. (Just ask Silicon Valley Bank.) They act on that belief. The acting creates the failure. The depositor who sees a line forming outside the branch and rushes to withdraw isn’t crazy. Each person in the line is behaving reasonably. The catastrophe is collective.

That’s what’s happening to the labor market.

The “bank” is the accumulated trust that human work has value, institutional knowledge matters, and judgment born from experience can’t be downloaded. The “depositors” are the CEOs, board members, and investors racing to withdraw their faith in that proposition before the guy across the street does. To be a CEO right now is to worry about getting AI right by 2026. Their fear has become their employees’ severance package.

The mechanism runs like this:

1. A belief forms: AI will replace most white-collar work.
2. The belief triggers layoffs.
3. The layoffs produce a signal: everyone’s cutting headcount because of AI.
4. The signal reinforces the belief.
5. The reinforced belief triggers more cuts.

And so it goes.

In 2025, companies cited AI in more than 55,000 job eliminations, twelve times the number from two years earlier. Amazon alone cut 16,000, Dell has shed 27% of its workforce since 2023, and over 45,000 tech jobs have vanished worldwide since January.

The market rewards each announcement: stocks tick up, narratives harden, and the next CEO takes the cue.

Then reflexivity does something worse. The layoffs hollow out the very capabilities that would make AI unnecessary. You fire the senior engineer who knows why the billing system breaks on Canadian tax calculations. That knowledge leaves with her. You gut the QA team that catches bugs a model would never flag. Your product degrades. Now you need AI to cover the gap you created. Because you burned the bridge behind you.

The prophecy builds the very machine that fulfills it.

I wrote about this dynamic in that reflexivity essay, too. About how narratives consume the people who create them:

We create personal myths and stories about who we are, and these myths gain a life of their own. They shape how others perceive us and how we perceive ourselves. Over time, we begin to conform to these narratives, even when they no longer serve us. The initial belief about who we are becomes a self-fulfilling prophecy, dictating our actions and limiting our potential.

A CEO announces an “AI transformation.” The market applauds. Now he’s the AI CEO. Retreating would shatter the narrative. So the narrative hardens, and the company bends to fit it, even when the evidence says otherwise.

Klarna replaced 40% of its workforce with AI, watched quality collapse, and hired humans back.

IBM claimed AI replaced 8,000 workers; the actual number was a couple hundred, and total headcount actually grew.

Amazon’s CEO initially credited AI for its 14,000 job cuts, then walked it back, saying that it’s “not even really AI-driven, not right now at least. It’s culture.” Meanwhile, Amazon convened an emergency engineering meeting after AI-assisted code changes caused a string of outages that took its site down for six hours.

Cut the people, deploy the AI, watch the AI break things. The loop in miniature.

I end this essay with a warning from my previous discussion of reflexivity:

Take the happy story of the Confident Job Seeker. She believes she is highly qualified and deserving of a job and, therefore, oozes steady confidence during her interview. This wows her interviewer, who proceeds to offer her the job, once again shaping reality in line with her initial belief.

Or, the sad tale of the Nervous Public Speaker. He is convinced that the audience will find his topic excruciatingly dull—so convinced, in fact, that the poor fellow proceeds to read his speech as quickly as possible from his notes, stumbling over his words and not once making eye contact. The audience, picking up on his discomfort, disengages, thus confirming his initial belief.

We are choosing the Nervous Public Speaker’s path. We’re so sure AI will devour the labor market that we’re feeding it the labor market on a veritable platter — firing people at best on spec and vibes, at worst because of a slide deck and all-consuming FOMO.

The toilet paper comparison from that earlier essay was supposed to be funny. Fears of shortage creating an actual, sh*tty shortage leading to pandemic absurdist theater.

Except now it isn’t toilet paper. It’s livelihoods. Institutional knowledge. The accumulated wisdom of people who know things no training dataset contains.

As I wrote, “like gravity, reflexivity is all around us but barely noticed unless we pay it attention. It exerts pressure that brings perception and reality ever closer.”

Like it or not, this is a bank run.

As with almost every bank run in history, it is driven not by insolvency but by the fear of insolvency.

A fear so powerful it produces the very outcome it dreads.

Per my about page, White Noise is a work of experimentation. I view it as a sort of thinking aloud, a stress testing of my nascent ideas. Through it, I hope to sharpen my opinions against the whetstone of other people’s feedback, commentary, and input.

If you want to discuss any of the ideas or musings mentioned above or have any books, papers, or links that you think would be interesting to share in a future edition of White Noise, please reach out to me by replying to this email or following me on X.

With sincere gratitude,

Tom

[1] A fairly large number are simply clueless. 9% of respondents aren’t sure about the extent of or reason for AI headcount reductions.

[2] Andrej Karpathy's US Job Market Visualizer is worth a look here: even occupations with high AI exposure scores are more likely to be reshaped than eliminated, and demand for many of them could grow as each worker becomes more productive.

Great essay! Working in technology (software, in particular), it astounds me the degree to which the very decision-makers driving these "AI Initiatives" truly DON'T understand what AI IS, what it does WELL (and especially what it DOESN'T), and where it absolutely needs human co-working to be made even usable.

My "prediction" is that, over time, we will begin to see these well-entrenched companies that made such foundation altering decisions based on the two business myths of the 2020s (Offshoring + AI as Panacea) die the "death by a thousand cuts" via the lower quality of their products and services, critical (digital) infrastructural vulnerabilities, loss of goodwill in the eyes of their primary customer base, and more - all in a self-reinforcing downward spiral.

All that said, it's a great time to be a principled, enterprising individual who enjoys learning and creating, because I feel that many of the "best and brightest" among us will see this maelstrom and opt for a different path. I'm optimistic that we may yet see a "changing of the guard" within our lifetimes.

Excellent analysis 🧐 It must be challenging to have your insights and intelligence at times. Reminds me of Cassandra, who was given the gift of prophecy but nobody was able to hear her. Sometimes I wonder if we are all run by our nervous systems more than being conscious? Thanks for a deeply thoughtful article.