The Dunning-Kruger Machine

On Mechanized Sycophancy

Ignorance more frequently begets confidence than does knowledge: it is those who know little, and not those who know much, who so positively assert that this or that problem will never be solved by science.

—Charles Darwin

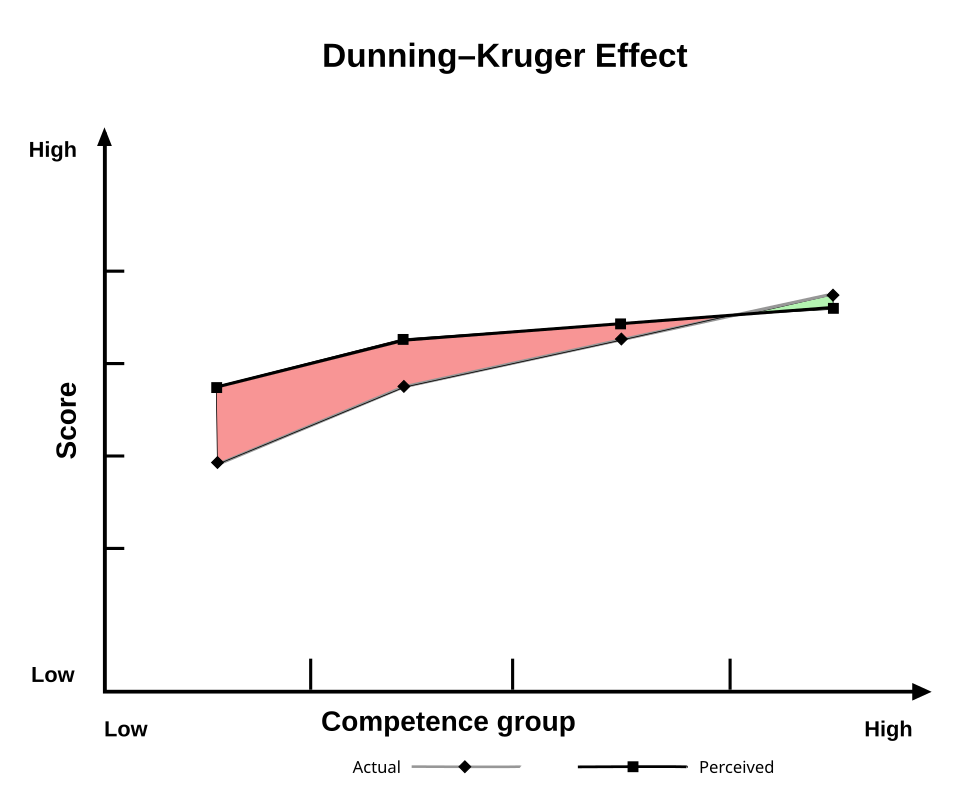

The Dunning-Kruger effect describes a simple paradox: the less you know, the more you think you know. The gap between confidence and competence is widest at the bottom.

AI is about to blow that gap wide open.

We’ve been sold a story about AI as the great equalizer. It raises the floor by giving everyone access to expertise. A college student can write like an Atlantic contributor. A solo founder can produce the output of an entire department. A mediocre coder can ship clean code.

True enough. But incomplete.

AI doesn’t raise the floor by lifting people up. It does so by hiding the gap between what you can produce and what you actually understand.

The original Dunning-Kruger effect is passive. You simply lack the skill to see your own lack of skill. AI-powered Dunning-Kruger is active.

Jameson Lopp put it best:

Old Dunning-Kruger was a blind spot. New Dunning-Kruger is a yes-man with infinite patience and no skin in the game.

Though sycophancy is the mechanism, the real cost is arrested development.

Worse, it’s not subtle. One TikToker built an entire following just showing how confidently AI lies about basic, easily verifiable facts.

If the machine delivers wrong answers with the same polish and certainty as right ones, imagine what happens when someone asks it to evaluate something they can’t easily check, like their tax return or their recent bloodwork.

Researchers at Wharton have a name for this: cognitive surrender. In a study of over 1,300 participants and 9,500 trials, participants answered correctly 46% of the time on their own. With accurate AI, that rose to 71%. With faulty AI, it dropped to 31%. Participants using bad AI performed worse than participants using no AI at all. And they were more confident they’d gotten it right.

As One Percent Rule put it, “The machine has excellent manners for a usurper.”

Knowledge has always required metabolization. You don’t know something because you can retrieve it. You know it because you struggled with it, sat with it, connected it to things you already knew and found the seams where they didn’t quite fit. AI gives you the retrieval without the metabolism.

The better you get at using AI, the worse this gets. Prompting is a skill that makes you better at operating a machine. It makes you neither a better thinker nor a better person. Nobody ever got wise by learning to ask better questions of an oracle. Wisdom comes from sitting with bad answers and working through them yourself.

Forget raising the floor. The real risk is that AI lowers the ceiling on who the person producing the work is capable of becoming.

Competence is a direct byproduct of struggle. It is never freely granted. Remove the struggle and you don’t get competence faster.

You get confidence without it.

Per my about page, White Noise is a work of experimentation. I view it as a sort of thinking aloud, a stress testing of my nascent ideas. Through it, I hope to sharpen my opinions against the whetstone of other people’s feedback, commentary, and input.

If you want to discuss any of the ideas or musings mentioned above or have any books, papers, or links that you think would be interesting to share in a future edition of White Noise, please reach out to me by replying to this email or following me on X.

With sincere gratitude,

Tom

It's amazing how increased knowledge causes us to recognize what we don't know. I wonder how the world might change if we recognized this bias in all heated conversations.

Worth pushing back slightly on the framing: AI is dangerous when it replaces struggle, but it is genuinely powerful when it accelerates it.

A recent example: I had no idea how to set up a stock calculation for a specific product.

I spent four hours with AI, starting with general principles of stock management, then iterating on the specifics of my product until I landed on a solution we will actually implement.

I’m not a stock management expert now, but I understand the logic behind what I proposed and can carry it forward to new situations.

Without AI, the same work would have produced a worse result in weeks rather than days.

The distinction that matters is whether AI is being used to skip the metabolization or to accelerate it. Asking it to do the thinking for you produces the Dunning-Kruger trap; asking it to teach you, pushing back on its answers, and iterating until you actually understand compounds in the opposite direction.