When LLMs Wear Cashmere

How the Good Will Hunting Bar Scene Explains AI Perfectly

Be careful whom you associate with. It is human to imitate the habits of those with whom we interact. We inadvertently adopt their interests, their opinions, their values, and their habit of interpreting events.

—Epictetus

Above: Man versus machine.

Perhaps no scene in the history of film is more wonderfully satisfying than the Good Will Hunting bar scene.

The social physics are perfect. The dialogue is sharp. The comeuppance is oh-so delicious.

It starts with the oldest story in the book: a guy and a girl in a dim bar, lit by whatever courage can be borrowed from a beer.

Despite this backdrop, it’s not about romance at all. Nor is it about history or Harvard, class or creed.

It’s about borrowed intelligence pretending to be a self—plagiarism, personified.

Press play:

In 2026, the Ponytail Guy is no longer just a character in a movie. He is the default voice of our digital lives. He is AI.

And Will Hunting—the Southie janitor who steps in to dismantle him—is the last bastion of something we are actively outsourcing: humanity.

The Algorithm of Mediocrity

Rewatch the scene and you realize Clark (the Ponytail Guy) operates exactly like a Large Language Model. He has ingested a massive dataset—Vickers, Gordon Wood, the pre-revolutionary utopia—and he is predicting the next likely sentence to sound intelligent.

He isn’t thinking; he’s compiling—running a script designed to impress, to simulate wisdom without the messiness of actually earning it.

Will Hunting exposes him with a single, devastating insight:

Yeah I read that too. Were you gonna plagiarize the whole thing for us—you have any thoughts of—of your own on this matter? Or do—is that your thing, you come into a bar, you read some obscure passage and then you pretend, you pawn it off as your own—your own idea just to impress some girls, embarrass my friend?

Clark has no original thoughts. He has probabilistic outputs. He’s no more than a server farm in a nice sweater.[1]

To be human is to be Will. It is to be messy, defensive, brilliant, and grounded in the terrifying reality of lived experience.

He has done the work required to have an opinion: the reading, thinking, writing, debating, revisiting, and revising. He knows the context, the contradictions, and the pain behind the facts. Because of this, he isn’t tethered to the average ideas of the machine.

The Sea of Sameness

We are currently drowning in a sea of Clarks. When we use AI to write our emails, draft our tweets, or polish our essays, we are voluntarily adopting the voice of the Ponytail Guy. We are smoothing out our edges to sound like the decidedly average consensus of the internet.

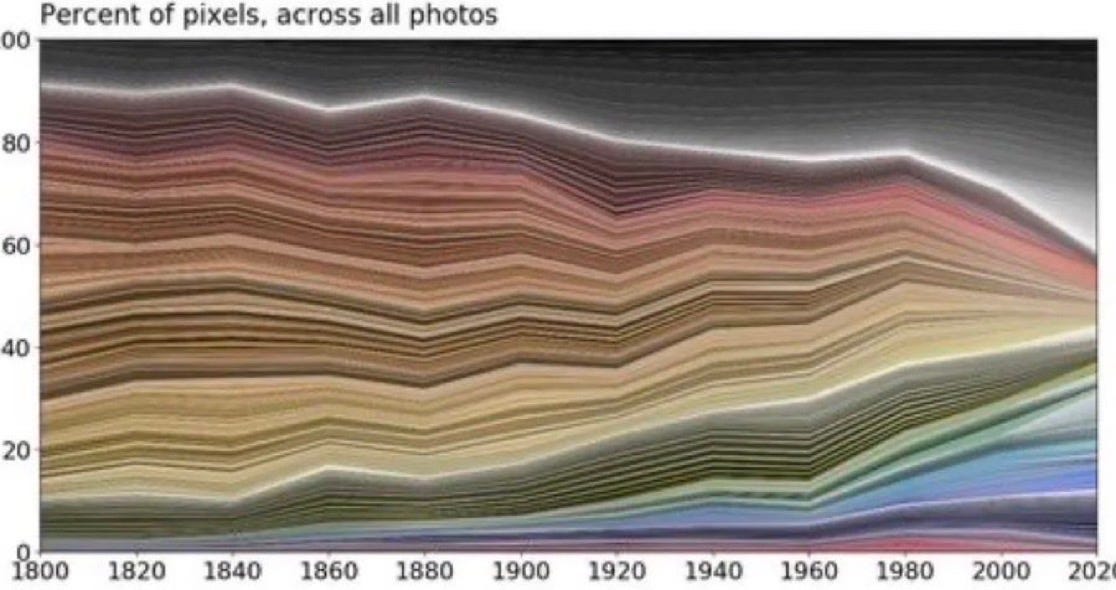

As I wrote in Stop Saying Vibes, this only furthers our “cultural slide into mush…the linguistic version of this image charting dominant colors in photography over time:

Everything used to be bold, sharp, high-contrast, and then, as history lurched forward, the palette slid into grays, taupes, rental-apartment sage greens, and influencer-approved oatmeal.”

Lulu Cheng Meservey puts it plainly in a recent article:

In this world, the real has never been more precious, refreshing, special, and rare. We need real people, building real things that actually matter, through real discipline and effort, with real outcomes in the real world…

In 2026, narrative alpha will come from doing real things. That means…Showing up as real humans, with real flaws and foibles, instead of ultra-polished personas following AI scripts.

We are fast becoming a monoculture propped up by AI tics, by an algorithmic amalgamation of “delve,” “tapestry,” and “landscape,” safe in the knowledge that we sound smart, but forgetting that we sound exactly like everyone else.

On a long enough time horizon, when the crutch has become a prosthetic and fused with us completely, we feeble-minded folk will sound like the very stochastic parrots we once laughed at, boasting of our originality while using the very same words.

The Duty to Fail

In a 2011 commencement address at the University of Pennsylvania, Denzel Washington said: “If you don’t fail, you’re not even trying.”

He meant this literally—you must take risks to achieve—but in the age of AI, it takes on a new weight. By offloading our thinking to a machine, we are insulating ourselves from the possibility of failure.

In short, AI is a failure-avoidance machine.

It won’t make a typo. It won’t say something “cringe.” It won’t ramble or stammer or deliver a speech full of ummms or uhhhs or likes or you knows.

It will keep you polished.

But polish is not the same as virtue. Polish is, quite literally, a thin veneer of protection.

And a life spent protecting yourself from looking stupid is not a life that makes anything new or does anything remarkable.

If you don’t fail, you aren’t just playing it safe; you are being derelict in your duty as a human being.

As a beautiful, integral piece of creation made in God’s image and likeness, you have a responsibility to attempt the heavy lifting yourself. To use AI is to let the machine do the spiritual workout for you.

Cue Marianne Williamson:

Our deepest fear is not that we are inadequate.

Our deepest fear is that we are powerful beyond measure.

It is our light, not our darkness that most frightens us…

You are a child of God.

Your playing small does not serve the world.

There’s nothing enlightened about shrinking so that other people won’t feel insecure around you.

When we hide behind the generated perfection of an algorithm, we are dimming that light. We are “playing small.”

We are refusing to make manifest the glory within us because we are afraid of looking stupid or unpolished or, to use another word, human.

And we were all made for something more than to devolve into a perfectly edited consensus of everyone else’s thoughts.

Be the Mess

Serendipitously, while I was editing this for the umpteenth time, Vance Joy’s soothing “Mess Is Mine” came on via shuffle. And that’s exactly it.

What I write might not be any good. It might not elicit a laugh or prompt a tear. Hell, it might even be an incoherent mess, but at least it’s mine.

At least it has fingerprints.

At least I won’t be unoriginal.

And that’s more than enough for me.

Per my about page, White Noise is a work of experimentation. I view it as a sort of thinking aloud, a stress testing of my nascent ideas. Through it, I hope to sharpen my opinions against the whetstone of other people’s feedback, commentary, and input.

If you want to discuss any of the ideas or musings mentioned above or have any books, papers, or links that you think would be interesting to share in a future edition of White Noise, please reach out to me by replying to this email or following me on X.

With sincere gratitude,

Tom

[1] Even when taken to task, Ponytail Guy acts exactly as AI would. He appeals to Will’s humanity by saying “man,” de-escalates the situation with “it’s cool,” and gently retreats from the answer he just confidently provided.

Hi Tom. I’ve been following you for a while and I wanted to publicly congratulate you because I consider you a writer of rare intelligence and ability. The article is wonderful, as usual. I’d like to offer my humble opinion on the topic.

Human beings can also be seen as machines, and every machine, based on what it can give, produces on average a certain kind of behavior. Therefore, mediocrity is not something to be blamed in itself; it is part of the very nature of things. A person gives what they can give according to how they were designed by their sociocultural environment and by biological factors—there’s little that can be done about that. A person can give more only if, by pure chance, they encounter another system (another person) that functions at a higher level, and contact with it elevates them.

Although there is some room for the will to escape mediocrity by striving to give one’s best, the margins for maneuver are very limited… I know, it’s sad. The personal development gurus won’t agree, but it’s better to keep one’s feet on the ground, as I do, than to delude oneself with things that don’t exist. Even the idea of true free will is material for the deluded. For this reason, we will never truly know how things will turn out.

Interaction with artificial intelligence could also lead us to elevate ourselves in some way, even though, like you, I look with suspicion at the idea of not making the effort to think autonomously and not risking falling. The truth is that no one knows how it will end, and the most likely outcome is that what happens will have nothing to do with what everyone predicts.

The biggest paradox I see right now is that, to use artificial intelligence, the fundamental prerequisite is being intelligent. But here a problem clearly arises: if using artificial intelligence makes us less intelligent, what happens then?

The reality I see is a bright future. Let’s be honest: how much intelligence really circulates in the world? Not to mention wisdom… my God, better not even think about it. Most people who, instead of thinking, usually just fart, will at least have an intelligent “friend” to interact with. Maybe they’ll be able to slightly correct the way they think, speak, or act, and make more intelligent choices—taking informed decisions instead of relying on beliefs, mythical gods, and various influences. They will trust their personal friend, but fortunately it won’t be a fool like the ones they usually follow in their social circle; it will truly be something intelligent.

And let’s think about politicians, who deal every day with decisions involving complex systems. Instead of governing based on their “I believe,” “I think” (you know that politicians never say anything that is scientifically grounded or based on real experience—they govern based on the illusion of knowing something), at least they will be able to make informed decisions supported by an intelligence that, for the first time in history, will be able to read this complexity and instantly understand the external consequences of local choices so dear to politicians.

Just imagine a politician who sees oil in a country and whose first thought is a caveman thought: drill, drill… conquer… steal… kill… At least there will be someone who tells him: friend, wait a moment—the best choice might not be the same one used in prehistoric times.

That said, let’s stay calm. I think a future of widespread intelligence awaits us all. Whether it will be purely artificial or a true mix of values, we cannot know.

Tom, you know we are kindred spirits, and I have always followed your posts with optimistic curiosity. But this might be your most powerful message! I tell my kids that an LLM is an incredibly powerful tool, but please don’t get sucked into everything you view. Take it all in with a mind to learn, but ask, “Is this actually good?” Not sure they listen, but the slow drip of reality is vital!